RESEARCH PROGRAM

Title: Applying Human-Centered Design to Complex Extended Reality Environments

Name: M.Sc. Vera Marie Memmesheimer

E-Mail: memmesheimer@cs.uni-kl.de

Phone: +49 (0) 631 205 2642

Project description:

Starting Situation

Extended Reality (XR) technologies make it possible to visualize detailed physical models in entirely virtual environments or as virtual augmentations of real-world scenes. These environments hold considerable potential for the time- and cost-efficient analysis of manufacturing systems and processes: virtual objects and simulations could be adapted to altered model parameters so that the effects of different parameter combinations could be analyzed collaboratively. The group of collaborators is usually heterogeneous: the people involved may come from different scientific or industrial fields, have different levels of expertise with XR technologies, and be located at different sites. In XR, collaborators could be provided with customized access to a joint space. To this end, devices that incorporate virtual objects to different extents, such as handheld displays, head-mounted displays, and projection-based technologies, could be combined with secondary displays of mobile devices and various input modalities based on gestures, gaze, or speech. While this combination of different XR devices, visualization techniques, and interaction techniques appears very promising in theory, practical applications of such joint XR spaces are still very rare.

Approach

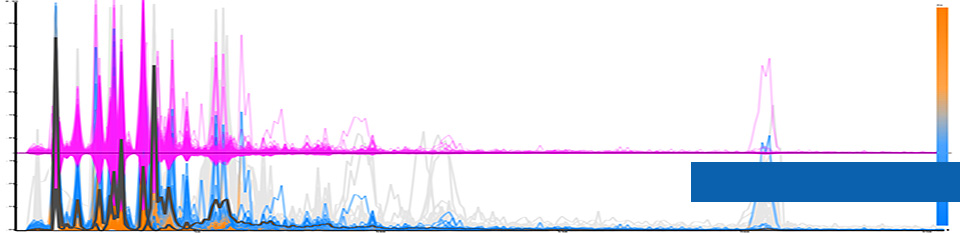

In line with the human-centered design paradigm, we will focus on both the development and the evaluation of collaborative XR environments. As a first step, we will investigate the compatibility of existing technologies and assess their suitability for real-world tasks. Since many of today’s evaluation tools restrict studies to artificial use cases in laboratory settings, we will also consider advanced evaluation approaches such as the collection of physiological and behavioral data. Based on these insights, we propose to develop visualization and interaction techniques that scale with an increasing number of collaborators and across the different XR technologies and input modalities, so that collaborators can choose how to access the joint space according to their individual preferences. To support the analysis of manufacturing processes in completely virtual environments as well as via augmentations of partly existing processes, the techniques should further scale across the different degrees of virtuality (i.e., from Augmented Reality to Virtual Reality).

Expected Results

The aim of this research project is to develop and evaluate scalable visualization and interaction techniques for collaborative XR environments that increase ease of use through enhanced learnability and memorability. To take full advantage of the technological variety offered in XR, the compatibility of different devices, tasks, users, and visualization and interaction techniques will be assessed with advanced evaluation tools. These findings will contribute to the development of an XR framework and design guidelines. In this way, the collaborative analysis of manufacturing systems and processes will be supported in co-located as well as in distributed XR settings.